SketchLattice: Latticed Representation for Sketch Manipulation

Yonggang Qi1* Guoyao Su1* Pinaki Nath Chowdhury2 Mingkang Li1 Yi-Zhe Song2

1Beijing University of Posts and Telecommunications, CN 2SketchX, CVSSP, University of Surrey, UK

ICCV 2021

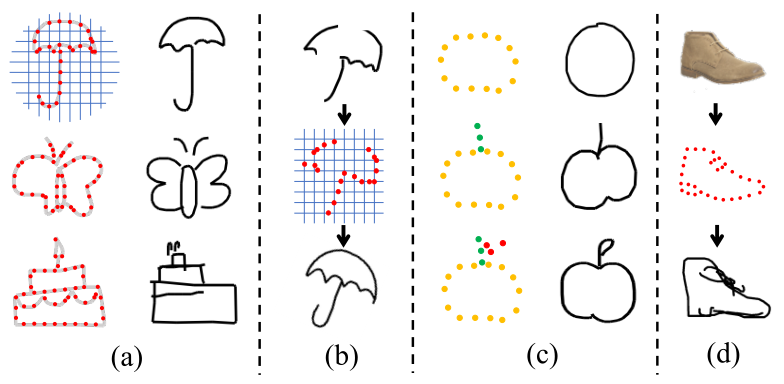

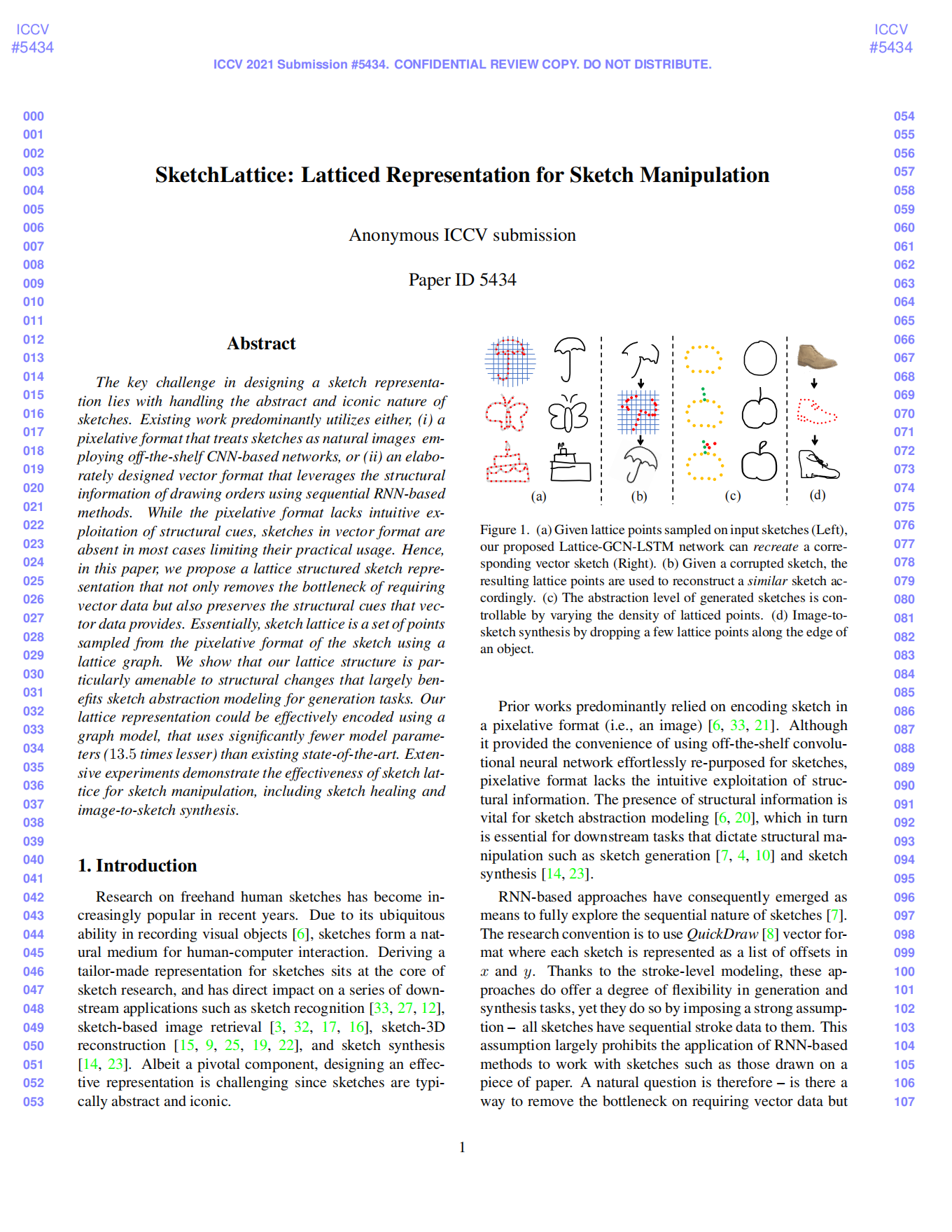

Figure 1. (a) Given lattice points sampled on input sketches (left), our proposed Lattice-GCN-LSTM network can recreate a corresponding vector sketch (right). (b) Given a corrupted sketch, the resulting lattice points are used to reconstruct a similar sketch accordingly. (c) The abstraction level of generated sketches is controllable by varying the density of lattice points. (d) Image-to-sketch synthesis by dropping a few lattice points along the edge of an object.

Introduction

The key challenge in designing a sketch representation lies in handling the abstract and iconic nature of sketches. Existing work predominantly uses either (i) a pixelative format that treats sketches as natural images and employs off-the-shelf CNN-based networks, or (ii) an elaborately designed vector format that leverages the structural information of drawing order using sequential RNN-based methods. The pixelative format lacks intuitive exploitation of structural cues, while sketches in vector format are unavailable in most cases, which limits their practical use. Hence, in this paper, we propose a lattice-structured sketch representation that not only removes the bottleneck of requiring vector data but also preserves the structural cues that vector data provides. Essentially, a sketch lattice is a set of points sampled from the pixelative format of the sketch using a lattice graph. We show that our lattice structure is particularly amenable to structural changes, which largely benefits sketch abstraction modeling for generation tasks. Our lattice representation can be effectively encoded using a graph model that uses significantly fewer parameters (13.5 times fewer) than the existing state of the art. Extensive experiments demonstrate the effectiveness of the sketch lattice for sketch manipulation, including sketch healing and image-to-sketch synthesis.

Our Solution

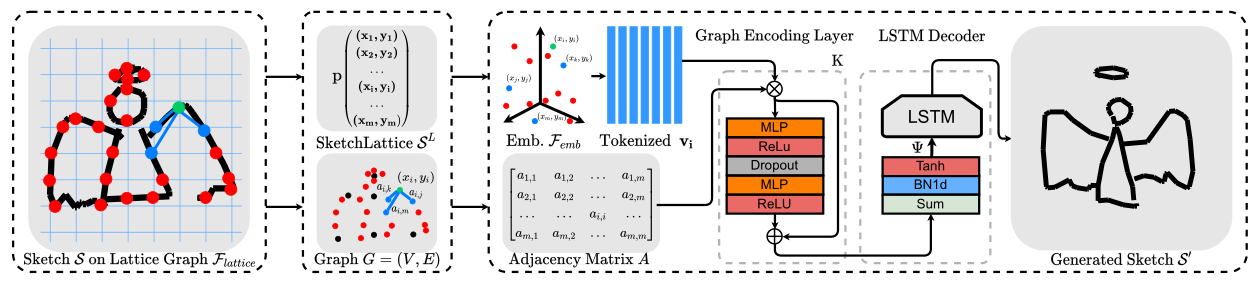

Figure 2. Framework overview.

Figure 2 shows a schematic representation of the Lattice-GCN-LSTM architecture. An input sketch image, or the edge map of an image object, is overlaid with our lattice graph to sample lattice points. All points where dark pixels in the sketch map overlap the uniformly spread lines of the lattice graph are sampled. Given the lattice points, we construct a graph using proximity principles. A graph model then encodes the SketchLattice into a latent vector. Finally, a generative LSTM decoder recreates a vector sketch that resembles the original sketch image.
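The sampling and graph-construction steps described above can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: it assumes a grayscale sketch stored as a NumPy array with values in [0, 1] and dark strokes on a light background. The function names, the `n_lines` and `dark_threshold` parameters, and the k-nearest-neighbour rule standing in for the "proximity principles" are all assumptions made for this example.

```python
import numpy as np


def sample_lattice_points(sketch, n_lines=32, dark_threshold=0.5):
    """Return (row, col) coordinates of dark pixels that lie on a uniform lattice.

    Hypothetical helper: `sketch` is assumed to be a 2-D array in [0, 1]
    with dark strokes on a light background.
    """
    h, w = sketch.shape
    # Uniformly spread horizontal and vertical lattice lines.
    rows = np.linspace(0, h - 1, n_lines).round().astype(int)
    cols = np.linspace(0, w - 1, n_lines).round().astype(int)
    on_lattice = np.zeros((h, w), dtype=bool)
    on_lattice[rows, :] = True
    on_lattice[:, cols] = True
    # Keep only pixels that are both dark and on a lattice line.
    dark = sketch < dark_threshold
    return np.argwhere(dark & on_lattice)


def proximity_edges(points, k=2):
    """Connect each point to its k nearest neighbours (an assumed proximity rule).

    Returns an array of (source_index, neighbour_index) pairs.
    """
    n = len(points)
    if n < 2:
        return np.empty((0, 2), dtype=int)
    k = min(k, n - 1)
    diff = points[:, None, :] - points[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1)).astype(float)
    np.fill_diagonal(dist, np.inf)  # a point is not its own neighbour
    neighbours = np.argsort(dist, axis=1)[:, :k]
    sources = np.repeat(np.arange(n), k)
    return np.stack([sources, neighbours.ravel()], axis=1)


# Usage: a 9x9 white canvas with one vertical stroke and a 3x3 lattice.
canvas = np.ones((9, 9))
canvas[:, 2] = 0.0
lattice_points = sample_lattice_points(canvas, n_lines=3)
lattice_edges = proximity_edges(lattice_points, k=1)
```

The resulting point set and edge list are the kind of input a graph encoder would consume. Increasing `n_lines` gives a denser lattice and therefore more sampled points.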

Experiments and Results

Sketch Healing

Sketch healing is a task akin to vector sketch synthesis. Specifically, given a partial sketch drawing, the objective is to recreate a sketch that best resembles the partial sketch.

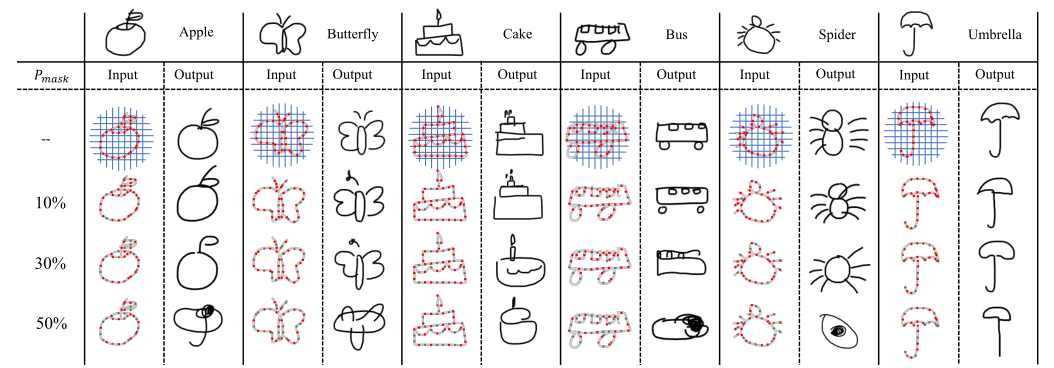

Figure 3. Qualitative results.

Exemplary sketches generated by SketchLattice on the Quick-Draw dataset under different corruption levels, set by the mask probability Pmask. As Pmask increases, the generated sketches become more abstract. For Pmask ≤ 30% the generated sketches are satisfactory, but at Pmask = 50% they struggle to faithfully recover the original sketch.
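One simple way to picture this corruption is sketched below. It assumes that each lattice point is dropped independently with probability Pmask; that reading of the mask probability, along with the function and parameter names, is an assumption for illustration and may differ from the exact procedure used in the experiments.

```python
import numpy as np


def mask_lattice_points(points, p_mask, rng=None):
    """Drop each lattice point independently with probability `p_mask`.

    Hypothetical helper: `points` is an (N, 2) array of lattice coordinates.
    """
    rng = np.random.default_rng() if rng is None else rng
    # rng.random draws from [0, 1), so a point survives with probability 1 - p_mask.
    keep = rng.random(len(points)) >= p_mask
    return points[keep]


# Usage: corrupt a toy set of 1000 points at Pmask = 30%.
toy_points = np.arange(2000).reshape(1000, 2)
corrupted = mask_lattice_points(toy_points, p_mask=0.3, rng=np.random.default_rng(0))
```

Under this assumption, roughly 70% of the points survive at Pmask = 30% and about half at Pmask = 50%, which fits the observation that higher mask probabilities leave less structure for the decoder to recover.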

| Method | VF | VC | #Params | Pmask | Acc | Top-1 |
|---|---|---|---|---|---|---|
| SR | √ | × | 0.67M | 10% | 25.08% | 50.65% |
| | | | | 30% | 3.44% | 43.48% |
| Sp2s | × | √ | 1.36M | 10% | 24.26% | 45.20% |
| | | | | 30% | 10.54% | 27.66% |
| SH | √ | √ | 1.10M | 10% | 50.78% | 85.74% |
| | | | | 30% | 43.26% | 85.47% |
| SH-VC | × | √ | 1.10M | 10% | - | 58.48% |
| | | | | 30% | - | 50.87% |
| Ours | × | × | 0.08M | 10% | 55.50% | 76.02% |
| | | | | 30% | 54.79% | 73.71% |

Table 1. Quantitative results

Table 1 shows that our approach outperforms the other baseline methods on recognition accuracy (Acc), suggesting that sketches healed by our method are more likely to be recognized as objects of the correct category. Importantly, unlike its competitors, which are very sensitive to the corruption level, our approach maintains a stable recognition accuracy even when Pmask increases to 30%.

Image-to-Sketch Synthesis

Our Lattice-GCN-LSTM network can be applied to image-to-sketch translation. Once trained, for any input image, we can obtain some representative lattice points based on the corresponding edges and lattice graph.

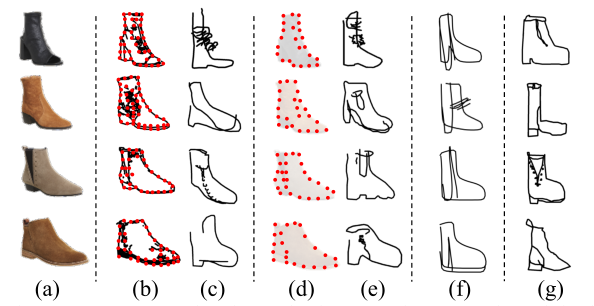

Figure 4. Image-to-sketch synthesis examples.

Figure 4 shows: (a) original photos from the Shoes-V2 dataset; (b) lattice points on the edges of the shoes in the photos; (c) sketches generated by our model; (d) points placed by a human with reference to the photos; (e) sketches generated by our model from the lattice points shown in (d); (f) sketches generated by LS-SCC for comparison; (g) human-drawn sketches of the photos.

Bibtex

If this work is useful for you, please cite it:

@inproceedings{yonggang2021sketchlattice,
  title={SketchLattice: Latticed Representation for Sketch Manipulation},
  author={Yonggang Qi and Guoyao Su and Pinaki Nath Chowdhury and Mingkang Li and Yi-Zhe Song},
  booktitle={ICCV},
  year={2021}
}

Created by Fengyin Lin @ BUPT

2021.8
